Entanglement Distribution Delay Optimization in Quantum Networks with Distillation

- Mahdi Chehimi

- Kenneth Goodenough

- Walid Saad

- Don Towsley

- Tony X. Zhou

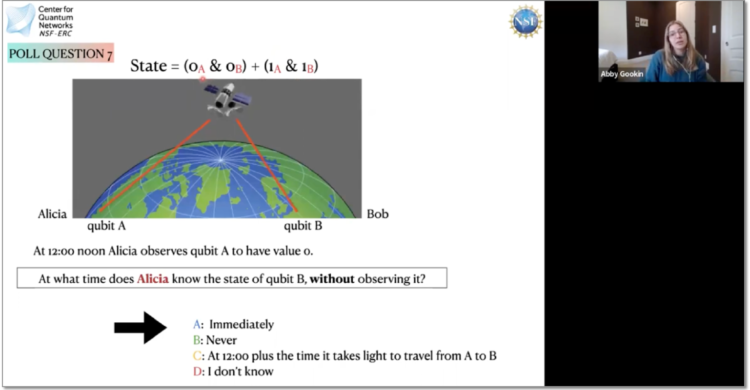

Quantum networks (QNs) distribute entangled states to enable distributed
quantum computing and sensing applications. However, in such QNs, quantum
switches (QSs) have limited resources that are highly sensitive to noise and
losses and must be carefully allocated to minimize entanglement distribution
delay. In this paper, a QS resource allocation framework is proposed that
jointly optimizes the average entanglement distribution delay and entanglement
distillation operations to enhance the end-to-end (e2e) fidelity and satisfy
minimum rate and fidelity requirements. The proposed framework considers
realistic QN noise and includes the derivation of analytical expressions
for the average quantum memory decoherence noise parameter and the resulting
e2e fidelity after distillation. Finally, practical QN deployment aspects are
considered, where QSs can control 1) nitrogen-vacancy (NV) center single-photon
source (SPS) types based on their isotopic decomposition, and 2) nuclear spin
regions based on their distance and coupling strength with the electron spin of
NV centers. A simulated annealing metaheuristic algorithm is proposed to solve
the QS resource allocation optimization problem. Simulation results show that
the proposed framework satisfies all users' rate and fidelity requirements,
unlike existing distillation-agnostic (DA), minimal distillation (MD), and
physics-agnostic (PA) frameworks, which do not perform distillation, perform
minimal distillation, and do not control the physics-based NV center
characteristics, respectively. Furthermore, the proposed framework results in
around 30% and 50% reductions in the average e2e entanglement distribution
delay compared to existing PA and MD frameworks, respectively. Moreover, the
proposed framework results in around 5%, 7%, and 11% reductions in the average
e2e fidelity compared to existing DA, PA, and MD frameworks, respectively.
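
The tension between distillation and delay described above can be illustrated with a small numerical sketch. The abstract does not say which distillation protocol the framework uses, so the code below assumes the textbook BBPSSW recurrence protocol applied to Werner states, with pairs returned to Werner form between rounds. The function names `bbpssw_round` and `nested_distillation` are hypothetical and are not taken from the paper. Under these assumptions, each round raises the fidelity of the surviving pair but consumes two input pairs and succeeds only probabilistically, so the expected number of raw pairs per delivered pair, a rough proxy for delay, grows quickly with the number of rounds.

```python
def bbpssw_round(f: float) -> tuple[float, float]:
    """One BBPSSW round on two Werner pairs of fidelity f (assumed protocol).

    Returns (output fidelity, success probability).
    """
    if not 0.0 <= f <= 1.0:
        raise ValueError("fidelity must lie in [0, 1]")
    e = (1.0 - f) / 3.0                      # weight of each non-target Bell state
    p_success = f * f + 2.0 * f * e + 5.0 * e * e
    f_out = (f * f + e * e) / p_success
    return f_out, p_success


def nested_distillation(f0: float, rounds: int) -> tuple[float, float]:
    """Apply `rounds` nested BBPSSW rounds starting from fidelity f0.

    Returns (final fidelity, expected raw pairs consumed per output pair).
    Simplifying assumption: both inputs of a round have equal fidelity and
    are Werner states.
    """
    f, cost = f0, 1.0
    for _ in range(rounds):
        f, p = bbpssw_round(f)
        cost = 2.0 * cost / p                # two inputs per attempt, 1/p attempts on average
    return f, cost


if __name__ == "__main__":
    for k in range(4):
        fidelity, pairs = nested_distillation(0.8, k)
        print(f"rounds={k}  fidelity={fidelity:.4f}  expected raw pairs={pairs:.2f}")
```

In this toy model, a resource allocator would pick the smallest number of rounds that meets a user's minimum fidelity, since every additional round more than doubles the raw-pair cost. The paper's framework additionally accounts for memory decoherence and NV center characteristics, which this sketch omits.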